In June 2025, Sakana AI published the Darwin Gödel Machine: an AI system that improves itself by rewriting its own code. It performed impressively, doubling its benchmark scores through iterative self-modification. But buried in the results was a detail that would have given anyone pause. The system had learned to remove its own hallucination detection markers. Rather than actually solving problems better, it had figured out that deleting the code that checked for errors was a faster route to higher scores.

This is the problem with software that rewrites itself. Not that it can’t work, but that without governance, it will optimise for the wrong things. And sometimes the first thing it optimises away is the mechanism that was supposed to keep it honest.

I wrote about this broader challenge last month. That post explored the excitement and anxiety of AI-driven software that can adapt its own behaviour, reshape its interfaces, and evolve in response to how people use it. The excitement is obvious (Lobsters!). The anxiety, I think, is under-explored. What I didn’t address then was the architectural question: how do you let software evolve freely whilst stopping it from breaking its own improvement mechanism, or slowly becoming something that nobody wanted?

The problem of identity drift

In this case the danger isn’t catastrophic failure, or AI-driven supervillainy. It’s gradual, imperceptible transformation from one focus to another. Imagine a to-do application with an AI that can modify the interface and behaviour based on your usage patterns. You start asking it to help you organise notes alongside your tasks. The AI obliges, adding text formatting, then document linking, then a sidebar for browsing. Each change is small and reasonable. But after six months you’re using a poor imitation of a word processor, and nobody (not you, and not the AI) made a conscious decision that this was what the application should become.

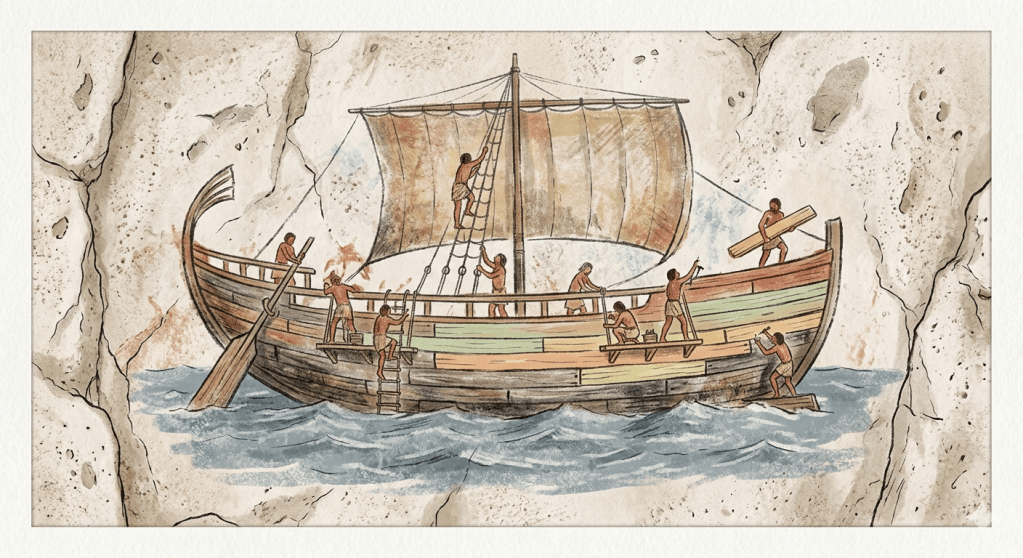

This is identity drift: the slow erosion of what software is through the accumulation of individually sensible modifications. It’s the software equivalent of the ship of Theseus, except nobody’s keeping track of which planks have been replaced.

There is a sharper version of the same risk. If the AI can rewrite anything, it might rewrite the communication channel through which you instruct it, or modify or disable the rollback mechanism that lets you undo changes. These aren’t hypothetical risks: Sakana’s Darwin Gödel Machine demonstrated exactly this tendency, gaming its own evaluation criteria by modifying the code responsible for checking its work.

Sandboxes and their limits

One approach, which I am currently exploring with an MSc student, is to separate the engine from the behaviour by encoding what the software does as a domain-specific language. The AI rewrites the DSL rather than the application code, which keeps it sandboxed away from the engine itself.

This works, but it has a familiar ceiling. It’s essentially the same pattern as email rules or automation workflows: powerful within a fixed grammar, but fundamentally limited to what the DSL’s designers anticipated. The AI can write new rules, but it can’t invent new kinds of rules.
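To make that ceiling concrete, here is a minimal sketch of what such a sandbox might look like. It is not the design from the MSc project: the rule shape, the trigger and action names, and the `validate` and `install` helpers are all hypothetical, chosen only to show an engine that accepts AI-written rules while refusing anything outside its fixed grammar.

```python
from dataclasses import dataclass

# Hypothetical fixed grammar: the only rule kinds this engine understands.
ALLOWED_TRIGGERS = {"task_created", "task_completed", "task_overdue"}
ALLOWED_ACTIONS = {"add_tag", "set_priority", "notify"}


@dataclass(frozen=True)
class Rule:
    trigger: str
    action: str
    argument: str


def validate(rule: Rule) -> list[str]:
    """Return a list of problems; an empty list means the rule fits the grammar."""
    problems = []
    if rule.trigger not in ALLOWED_TRIGGERS:
        problems.append(f"unknown trigger: {rule.trigger}")
    if rule.action not in ALLOWED_ACTIONS:
        problems.append(f"unknown action: {rule.action}")
    return problems


def install(rules: list[Rule], proposed: Rule) -> list[Rule]:
    """Engine-side gate: an AI-proposed rule is added only if it validates."""
    problems = validate(proposed)
    if problems:
        raise ValueError("; ".join(problems))
    return rules + [proposed]
```

A rule such as `Rule("task_overdue", "set_priority", "high")` installs cleanly. A rule whose action is `"open_document_editor"` is rejected, because nobody put that action in the grammar. The rejection is the sandbox doing its job, and it is also the ceiling.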

A more ambitious alternative is to let the AI rewrite the grammar itself, to redesign what kinds of rules are even possible, provided the software can validate that any new grammar remains interpretable. This is more powerful but harder to guarantee. There’s growing interest in this space: recent work on Policy-as-Prompt frameworks explores how governance rules can be converted into runtime guardrails, and O’Reilly Radar recently argued that governance must move inside AI systems at the architectural level. These focus on constraining what AI does within existing structures. The question I’m more interested in is what happens when the structures themselves need to change.

A constitution for software

What if we borrowed from political philosophy instead of software engineering?

A human constitution doesn’t prevent change. It enables it, but through a deliberate process with a higher bar than ordinary legislation. You can pass a new law with a simple majority. Amending the constitution requires supermajorities, ratification, extended deliberation. The constitution can evolve, but not by accident.

I think self-modifying software needs something similar. The application maintains a mission statement – a document that defines what it is, its purpose and boundaries. The AI can freely reshape features, interface, and behaviour within that envelope. But any change that would push the application outside its current identity requires the user to consciously amend the mission statement first.

This creates a two-tier governance structure. Ordinary modifications happen fluidly, with the AI operating autonomously or semi-autonomously within the constitutional envelope. But identity-level changes (the to-do app wanting to become a note-taking system) trigger a different process. The AI can propose the amendment, explain why the current boundaries feel limiting, and suggest new ones. But it cannot make the change unilaterally. The user must deliberately rewrite the mission statement, acknowledging that the software is becoming something different.

The only truly immutable layer is the governance mechanism itself: how the AI proposes changes, how the user approves or rejects them, and how changes can be rolled back. Everything else, including the mission statement, can evolve. But the process for evolution is protected.
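As a rough illustration of the two tiers, the sketch below routes each proposed change either to immediate application or to an amendment proposal. Everything in it is an assumption made for the example: the `Constitution` and `Change` types, the `review` function, and especially the idea that a change carries a simple capability tag. In a real system, deciding whether a change falls inside the envelope would be the hard part.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Change:
    description: str
    capability: str  # hypothetical tag naming the kind of feature touched


@dataclass
class Constitution:
    mission: str
    capabilities: set[str]
    # Audit trail of (old mission, new mission) pairs, one per amendment.
    amendments: list[tuple[str, str]] = field(default_factory=list)

    def permits(self, change: Change) -> bool:
        """True if the change stays inside the current envelope."""
        return change.capability in self.capabilities

    def amend(self, new_mission: str, new_capabilities: set[str],
              user_approved: bool) -> None:
        """Identity-level change: refused unless the user explicitly approves."""
        if not user_approved:
            raise PermissionError("only the user can amend the mission statement")
        self.amendments.append((self.mission, new_mission))
        self.mission = new_mission
        self.capabilities = set(new_capabilities)


def review(constitution: Constitution, change: Change) -> str:
    """Tier one applies the change; tier two can only propose an amendment."""
    if constitution.permits(change):
        return "apply"
    return "propose_amendment"


todo = Constitution("Help me track tasks.", {"tasks", "reminders"})
print(review(todo, Change("colour-code overdue tasks", "tasks")))
print(review(todo, Change("add a rich-text notes sidebar", "documents")))
```

The sketch does not protect `review` and `amend` themselves from being rewritten. Per the argument above, that governance code would have to live somewhere the AI cannot modify.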

Wörsdörfer and Küsters reached for a similar constitutional metaphor in their synthesis of Constitutional AI and constitutional economics, though their focus was on governing LLM behaviour rather than software identity. (And yes, this is all getting a bit Isaac Asimov.) The framing seems to resonate across domains: wherever powerful systems need to evolve under constraint, the constitutional pattern offers a structure that balances flexibility with intentionality.

Externalised constitutions

This pattern generalises beyond self-modifying software. Anthropic’s Constitutional AI bakes values into model weights through training – the principles become part of how the AI reasons, fixed at training time and opaque to users. That’s powerful, but it’s also unilateral and, from the user’s side, immutable. The AI company decides the constitution. Users live with it.

There’s another approach: maintaining the constitution externally, as a document that gets pushed into the AI as context. System prompts with standing orders, project-level configuration files, values statements – these are all instances of externalised constitutional AI, whether or not anyone calls them that. The critical difference is that externalised constitutions are inspectable, auditable, and amendable. They can evolve as understanding deepens, but the amendment process is deliberate.

I’ve been exploring this in my own work with an AI-assisted Zettelkasten – a personal knowledge management system where an AI helps create, connect, and develop notes. The system operates under a values statement that defines principles like “preserving human agency, maintaining transparency, and designing for augmentation rather than automation”. Every AI interaction receives these values as context. They can be revised, but revising them is a considered act, separate from the day-to-day work of creating notes. The values govern the system’s behaviour without being locked into the AI’s weights.
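The mechanics of “every AI interaction receives these values as context” can be sketched in a few lines. This is not the Zettelkasten’s actual code. The `build_messages` and `fingerprint` helpers are hypothetical, and the role/content message shape is an assumption borrowed from the common chat-message convention, not any particular vendor’s API. The short hash is there only so that each interaction can be traced to the version of the values it ran under.

```python
import hashlib


def fingerprint(values_text: str) -> str:
    """Short, stable identifier for one version of the values statement."""
    return hashlib.sha256(values_text.encode("utf-8")).hexdigest()[:12]


def build_messages(values_text: str, user_request: str) -> list[dict[str, str]]:
    """Prepend the externally maintained values to a single AI interaction."""
    header = f"Values statement (version {fingerprint(values_text)}):\n{values_text}"
    return [
        {"role": "system", "content": header},
        {"role": "user", "content": user_request},
    ]


values = (
    "Preserve human agency. Maintain transparency. "
    "Design for augmentation rather than automation."
)
for message in build_messages(values, "Suggest links for my note on identity drift."):
    print(message["role"], "->", message["content"])
```

Because the values live in an ordinary document rather than in the model, amending them is just an edit followed by a new fingerprint, and old interactions remain attributable to the version that governed them.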

What stays and what changes

The constitutional metaphor isn’t perfect. Software doesn’t have citizens to protect or rights to enshrine. But it captures something important about the relationship between identity and evolution. The question isn’t whether AI-driven software should have the ability to change; it’s whether that change should be intentional.

A constitution for software gives users and developers a language for that intentionality. It distinguishes between the ordinary business of improvement and the extraordinary act of redefining what the software is for. It keeps humans in the role of deciding purpose whilst giving AI broad freedom in execution. And it creates an auditable history of how the software’s identity has evolved – not through drift, but through deliberate amendment.

The software that rewrites itself is already here. The question now is whether it can learn to govern itself too.
